
After that, the optimal move was Nf3xe5 but it's past the 50 minutes mark I mentioned before ( you can see the engine analysis for an hour and 22 minutes in this screenshot). After 27 minutes, it suggested Ng1 all the way up to 56 minutes.Again, after 24 minutes the best move was Ne3.

After 18 minutes the line changed back to Qc3.

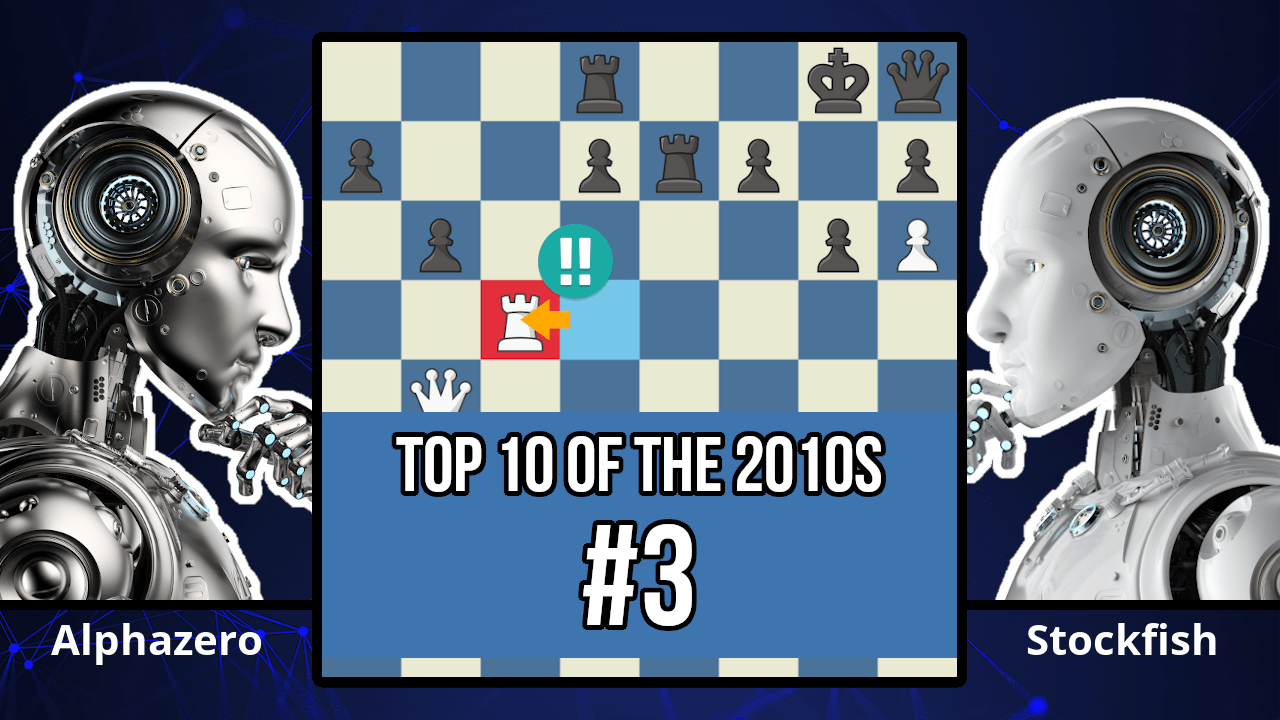

That's good, but I kept it running for a complete 50 minutes in order to reach the computations of the Stockfish in the game that was allowed 1 minute: Stockfish started out suggesting some moves, but after 6 minutes on my computer (corresponds to 7.2 seconds on the Stockfish in the real game) it preferred a4-a5 just as the game went.I ran Stockfish analysis on the above position and I got the following results: So I can simulate the game if I run it for 50 minutes at each move. Let's say I have a computer that runs the Stockfish engine at a speed of 1,400k nodes per second, that's about 50 times lower than the Stockfish in the real game ( In the paper, it says 70,000k n/s). In this position, Stockfish chose to move the pawn on the left side ( a4-a5). In the above case, it probably didn't matter much if Stockfish ran for twice the time because the decision would have been the same, but on the next move it definitely would: Kg1-h1 is still the choice for Stockfish. In the paper, it is said that stockfish calculates 70,000k positions per second and is run for 1 minute per move, that's about 50 times my hardware, so I'll let mine run for 50 minutes. After that, it decides Be3 is better.īut after 5 minutes on my hardware that runs on 1,400k nodes/s it will decide to go with Kh1 as the optimal move. At first, It gives b4 as the optimal move when the engine is running for about a minute.I ran the latest stockfish on the position (at move 11): Here's what I originally said in my reply: Stockfish changes it's decision when it analyses all the possible moves in more depth. Why? Because he ran Stockfish for 15 seconds, and if he had run it for an hour he would have gotten Kg1-h1 as the best move in that position. Yes, it was a passive move and it seems that Stockfish should have played a more developing move. 
On the other hand, stockfish on his computer showed a more developing move like Be3 (move the dark square bishop), lets look at the position: He said that on the position below (game 1 - move 11) Stockfish played Kg1-h1 (moved its king) which made no sense at all. On the first report that was posted on someone claimed that Stockfish was not playing optimally because he couldn't reproduce the same results using the same Stockfish on his computer. Stockfish's performance is dependent upon both the time limit and the hardware configuration, so just think of when someone doubles the CPU threads, then Stockfish needs less time (not necessarily half) to find the solution than it would with the first configuration. The time limit of 1 min/move (How would this disadvantage Stockfish?) I think it's best if I elaborate on your second point with an example move in the game 1 between AlphaZero and Stockfish which also served to satisfy my curiosity today. I think it's fair to say that AlphaZero was using an 800 pound gorilla of a hardware configuration compared to Stockfishes mouse. A top of the line I7 that you would find in a gaming machine would typically be about 100 GFLOPs (i.e. Note TFLOPS = 1000 billion floating point operations per second.įor comparison Intel's latest most powerful chip is the Core i9 Extreme Edition processor which clocks in at 1 TFLOP. They used 4 TPUs for the games, so a processing power of 180 TFLOPS. GB of High Bandwidth Memory in the second generation design increasedīandwidth to 600 GB/s and performance to 45 TFLOPS.

Google stated theįirst generation TPU design was memory bandwidth limited, and using 16 The second generation TPU was announced in May 2017. This is how Wikipedia describes the second generation TPUs they used.

It used specialized Tensor Processor Units (TPUs) rather than general Central Processing Units (CPUs) as are available commercially. So, AlphaZero used special hardware developed by Google. TPUs Stockfish and Elmo played at their strongest skill level using 64 Second-generation TPUs to train the neural networksĪlphaZero and the previous AlphaGo Zero used a single machine with 4 Starting from randomly initialised parameters, using 5,000įirst-generation TPUs (15) to generate self-play games and 64 Training proceeded for 700,000 steps (mini-batches of size 4,096) This is Google you're talking about! So the answer is obviously "No".įrom the original paper hardware used for initialising and training. That's why I wonder whether there have been any attempts made to
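The back-of-the-envelope arithmetic used in this post (1,400k nodes/s locally versus the paper's 70,000k nodes/s, and 4 TPUs at 45 TFLOPS each) can be checked with a few lines of Python. This is only a rough sketch: the function and variable names are made up for illustration, and it assumes that searching the same number of nodes gives a comparable analysis. That is a simplification, since a 64-thread search does not behave exactly like a slower search run for longer.

```python
# Figures quoted in the post; every name here is illustrative only.
LOCAL_NPS = 1_400_000      # nodes per second on the author's computer
PAPER_NPS = 70_000_000     # nodes per second reported for Stockfish in the paper
PAPER_SECONDS_PER_MOVE = 60


def equivalent_seconds(seconds, from_nps, to_nps):
    """Time a machine searching at to_nps needs to visit as many nodes
    as a machine searching at from_nps visits in `seconds`."""
    return seconds * from_nps / to_nps


# How much faster the match hardware was than the local machine.
speed_ratio = PAPER_NPS / LOCAL_NPS

# Local time needed to match one minute of search on the match hardware.
local_minutes_per_move = (
    equivalent_seconds(PAPER_SECONDS_PER_MOVE, PAPER_NPS, LOCAL_NPS) / 60
)

# Six minutes of local analysis, expressed in match-hardware seconds.
six_local_minutes_on_match_hw = equivalent_seconds(6 * 60, LOCAL_NPS, PAPER_NPS)

# Combined throughput of the four second-generation TPUs used for play.
tpu_total_tflops = 4 * 45

print(f"speed ratio: {speed_ratio:.0f}x")
print(f"local minutes per move: {local_minutes_per_move:.0f}")
print(f"6 local minutes = {six_local_minutes_on_match_hw:.1f} s in the match")
print(f"TPU throughput: {tpu_total_tflops} TFLOPS")
```

The same helper converts any of the timestamps above: for example, `equivalent_seconds(18 * 60, LOCAL_NPS, PAPER_NPS)` gives the match-hardware time that corresponds to the 18-minute mark.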
